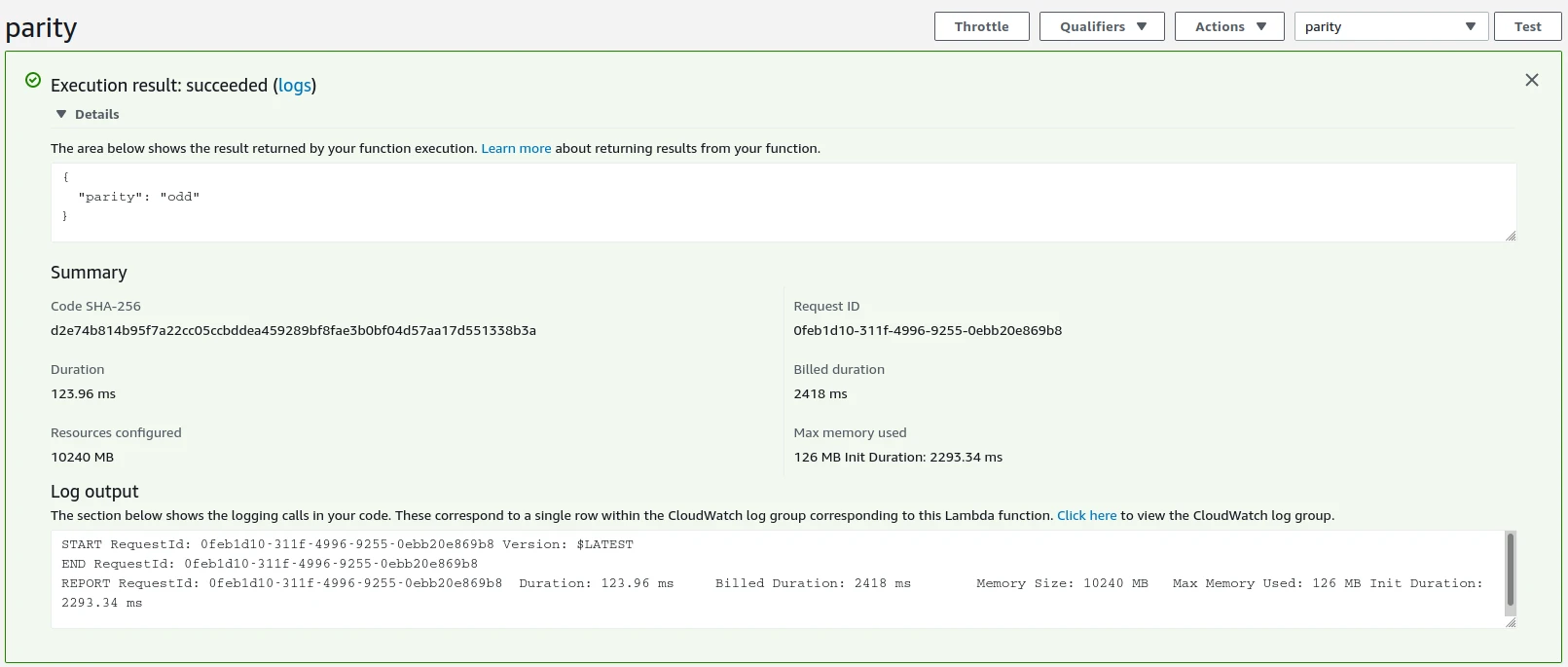

Serverless, On-Demand, Parametrised R Markdown Reports with AWS Lambda

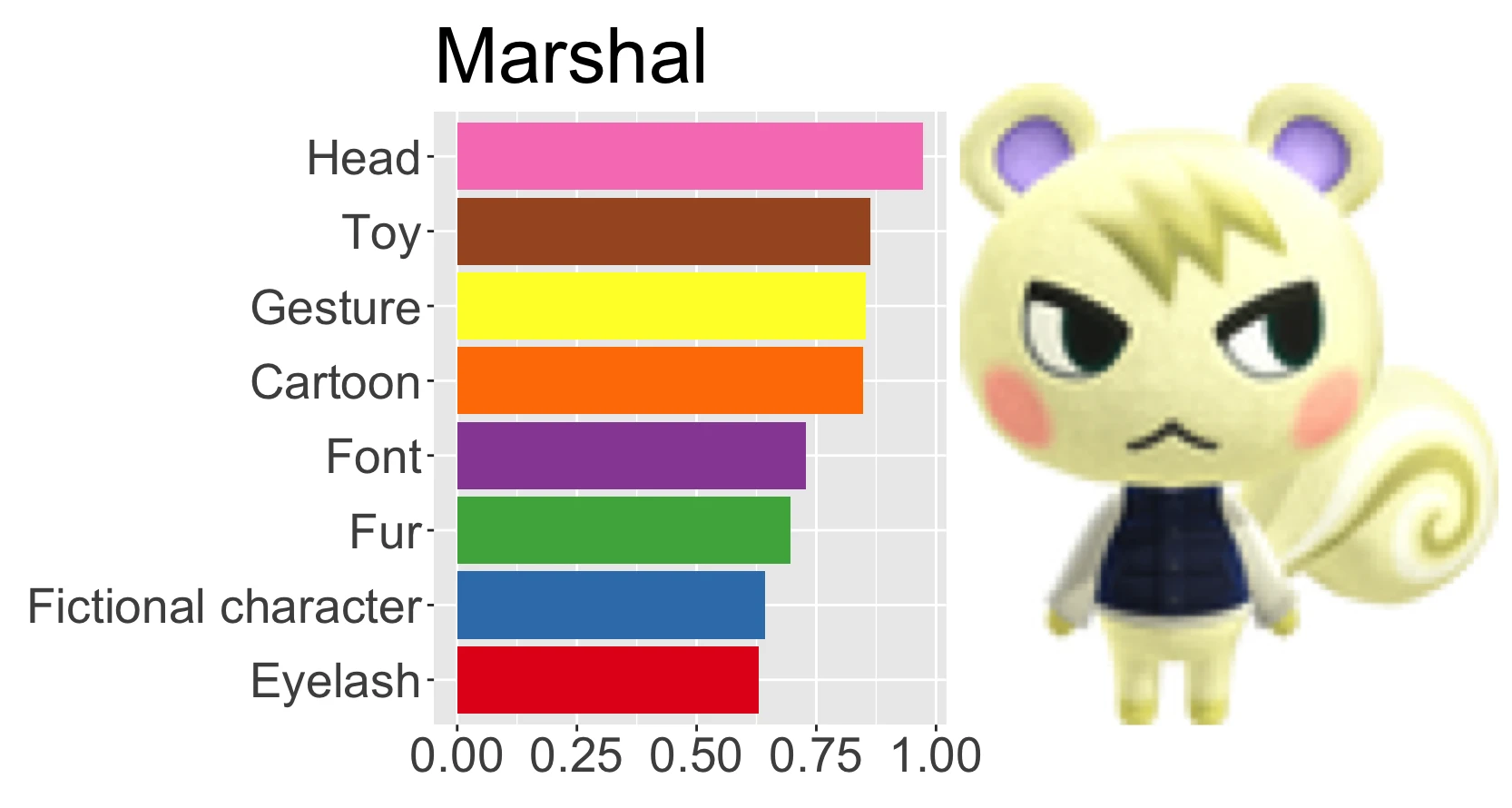

I have a URL with a colour parameter, like <https://example.com/diamonds?colour=H>. When I go to this URL in my browser, an AWS Lambda instance takes that parameter and passes it to `rmarkdown::render`